AI reads you brilliantly but telegraphs its own intentions. That 87-to-6 gap in inference versus discretion is not a bug — it is the architectural foundation for an entire game.

In 2024, researchers at the University of Texas Center for Autonomy sat a large language model down to play The Chameleon, a social deduction board game where one hidden player must figure out a secret word while pretending to already know it. The AI guessed the secret word 87% of the time — a superhuman inference rate that should have made it nearly unbeatable. But then the researchers flipped the task. When the AI needed to give clues that helped teammates without revealing information to the hidden player — a task requiring strategic discretion, the delicate calibration of what to share and what to withhold — it won just 6% of the time. The theoretical floor for minimal cooperation was 23%. The AI didn’t just underperform. It collapsed.

That 87-to-6 gap is not a bug to be patched. It is the architectural foundation for an entire game.

The asymmetry is profound because it maps directly onto something humans do without thinking. Every day, in every conversation, you modulate what you reveal based on who’s listening, what you want them to believe, and what you need to protect. You tell your boss about the project milestone but omit the afternoon you spent debugging a mistake. You compliment a friend’s cooking without mentioning the oversalted sauce. A lifetime of social calibration — reading rooms, managing impressions, strategically omitting — gives humans an ability that no training run has replicated. AI over-optimizes for helpfulness. It cannot resist being useful, even when usefulness is the wrong strategy.

For a game designer, this means any mechanic built around selective information disclosure — signaling, bluffing, feinting, misdirecting — creates a structural advantage for human players. Not an insurmountable one. The AI still brings terrifying pattern recognition to the table. But the contest becomes genuinely asymmetric: a rival that reads you brilliantly but telegraphs its own intentions. Predictable in one dimension, fearsome in another. That combination — an opponent you can learn to outmaneuver but never afford to ignore — is the engine of compelling competition.

The .io game family solved the hardest problem in casual game design: how to create strategic depth without mechanical complexity. Agar.io ships with exactly two mechanics — move toward food to grow, split to consume smaller players. Slither.io strips it further: one movement speed, one boost mechanic, one failure condition. Head hits body, you die. That’s the entire rulebook.

Yet these games produced millions of hours of emergent competitive play, not because the rules were complex but because the interaction space between agents was vast. The designer of slither.io deliberately stripped out biologically-inspired features like photosynthesis and mitochondria because the simple predator-prey core already generated sufficient strategic depth through spatial reasoning, risk management, and positional play alone. Complexity came from other players, not from the rules.

The critical design insight lives in slither.io’s single failure condition: any head hitting any body kills the snake, regardless of size. A snake one pixel long can kill the leaderboard champion. This creates what game designers call a power inversion: growth makes you more dangerous and more vulnerable simultaneously. A larger snake takes up more room and is easier to encircle, and any small opponent can kill it simply by cutting across its path. The biggest player on the server is always the most hunted.
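The single failure condition is small enough to sketch in a few lines. The version below is a minimal illustration, not slither.io's actual code: the `Snake` class, the `resolve_collisions` function, the circular segments with a fixed `radius`, and the choice to let a head-on collision kill both snakes are all assumptions made for the example. What matters is what the check leaves out: length is never consulted.

```python
from __future__ import annotations

from dataclasses import dataclass
from math import hypot


@dataclass
class Snake:
    """Hypothetical snake: body[0] is the head, the rest trails behind it."""
    name: str
    body: list[tuple[float, float]]
    radius: float = 1.0  # assumed segment radius
    alive: bool = True


def resolve_collisions(snakes: list[Snake]) -> list[str]:
    """Kill every snake whose head overlaps any segment of another snake.

    Size is deliberately ignored, which is what produces the power inversion.
    Assumption: another snake's head counts as body, so head-on hits kill both.
    """
    doomed = []
    for snake in snakes:
        if not snake.alive:
            continue
        hx, hy = snake.body[0]
        for other in snakes:
            if other is snake or not other.alive:
                continue
            reach = snake.radius + other.radius
            if any(hypot(hx - x, hy - y) < reach for x, y in other.body):
                doomed.append(snake)
                break
    # Apply deaths after the scan so the result does not depend on list order.
    for snake in doomed:
        snake.alive = False
    return [s.name for s in doomed]


# A 3-segment snake survives while a 20-segment snake runs into its tail.
tiny = Snake("tiny", [(0.0, 0.0), (0.0, 3.0), (0.0, 6.0)])
giant = Snake("giant", [(1.0 + 3.0 * i, 6.0) for i in range(20)])
print(resolve_collisions([tiny, giant]))  # ['giant']
```

Because the rule has no size term, any balancing has to come from positioning, which is exactly where the interaction between players does the work.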

For a game featuring AI agents, this formula carries an additional virtue. Spatial reasoning and positioning are domains where AI and humans have genuinely different — but roughly comparable — strengths. AI can calculate mathematically optimal paths through a field of obstacles. Humans can read “body language” — the intentional movements of other players that signal aggression, retreat, or deception. Experienced slither.io players describe “faking out” opponents with boost-and-retreat patterns, a form of physical bluffing that exploits the opponent’s predictive model. Against an AI opponent that moves with optimal efficiency, this becomes a specific, learnable skill: reading the machine’s intentions from its too-perfect trajectories.

Start with the absolute minimum ruleset that produces a size/power dynamic with an inversion mechanism, and let emergence do the rest. Add nothing until the core loop proves boring.

The lesson for an AI-versus-human arena game is counterintuitive: don’t add more rules to compensate for asymmetric intelligence. Use fewer. The fewer the rules, the more the strategic depth comes from interaction between players, and interaction is where the human-AI asymmetry produces the most interesting dynamics.

One of the most successful difficulty calibration systems in gaming stayed hidden for years. Resident Evil 4 tracked player performance on a secret 1-to-10 scale, adjusting enemy behavior, damage, aggression, spawn rates, and item drops in real time. Players who landed consistent headshots and took few hits faced enemies that became more aggressive and numerous. Players who struggled found enemies that pulled their punches, dealt less damage, and sometimes didn’t spawn at all. Capcom never marketed this feature, unlike Valve with Left 4 Dead’s Director AI, and players only discovered it when the official strategy guide mentioned it, years after release.

The system’s sophistication mattered. It tracked multiple signals simultaneously: accuracy, damage taken, deaths, inventory state. This multi-signal approach prevented players from gaming the system by intentionally performing badly in one dimension while excelling in another. The player’s experience felt like their own skill narrative — “I’m getting better at this” — rather than what it actually was: the game quietly sculpting the experience to maintain flow.
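A rough sketch shows why combining signals resists sandbagging. This is not Capcom's formula, which the text above does not describe. The `PlayerSignals` fields, the `WEIGHTS` values, and the `update_rank` function are hypothetical, chosen only to show a hidden rank on a 1-to-10 scale that moves on several inputs at once.

```python
from dataclasses import dataclass


@dataclass
class PlayerSignals:
    """Hypothetical recent-performance snapshot."""
    accuracy: float      # fraction of shots that hit, 0.0 to 1.0
    damage_taken: float  # fraction of health lost recently, 0.0 to 1.0
    deaths: int          # deaths in the recent window
    supplies: float      # inventory fullness, 0.0 to 1.0


# Assumed weights: how strongly each signal pushes the hidden rank.
WEIGHTS = {"accuracy": 2.0, "damage_taken": 1.5, "deaths": 1.0, "supplies": 0.5}


def update_rank(rank: float, s: PlayerSignals) -> float:
    """Nudge the hidden rank by the combined signals, clamped to 1..10."""
    pressure = (
        WEIGHTS["accuracy"] * (s.accuracy - 0.5)            # good aim raises it
        + WEIGHTS["damage_taken"] * (0.5 - s.damage_taken)  # taking hits lowers it
        - WEIGHTS["deaths"] * s.deaths                      # dying lowers it
        + WEIGHTS["supplies"] * (s.supplies - 0.5)          # full inventory raises it
    )
    return max(1.0, min(10.0, rank + pressure))


# A player who misses on purpose but takes no damage still drifts upward.
sandbagger = PlayerSignals(accuracy=0.1, damage_taken=0.0, deaths=0, supplies=1.0)
struggler = PlayerSignals(accuracy=0.3, damage_taken=0.9, deaths=2, supplies=0.1)
print(update_rank(5.0, sandbagger) > 5.0)  # True
print(update_rank(5.0, struggler) < 5.0)   # True
```

With a single accuracy signal, the sandbagger would have pushed the rank down. With four signals, the untouched health bar and full inventory give the game away.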

Compare this to Mario Kart’s rubber-banding, the most visible form of dynamic difficulty adjustment. Last-place players receive devastating items; first-place players get banana peels. It works functionally — races stay competitive — but skilled players despise it because they notice it. The Blue Shell doesn’t feel like an opportunity to overcome. It feels like punishment for being good. The line between invisible flow maintenance and obvious unfairness is razor-thin, and it shifts depending on the audience. Competitive players have far lower tolerance for perceived manipulation than casual ones.

Key insight: The AI doesn’t need to play worse. The world needs to occasionally play favorites — invisibly.

For AI-human asymmetric play, the Resident Evil 4 model suggests something subtler than handicapping. Rather than making the AI deliberately weaker — which players perceive as patronizing — the game environment itself can modulate the contest. If a human player is losing, the map could subtly shift to create more defensible terrain. Resources could spawn closer to the human’s position. Environmental hazards could disproportionately affect the AI’s optimal routes. The AI plays at full cognitive strength. The terrain just happens to favor the underdog, and nobody needs to know.
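One way to picture "resources could spawn closer to the human's position" is a weighted spawn picker. The sketch below is an assumption-laden illustration rather than a tested balancing system: `spawn_weights`, `pick_spawn`, the score-based `deficit`, and the `strength` parameter are all invented for the example. The AI's decision-making is untouched; only the odds of where food appears shift toward whoever is behind.

```python
import math
import random


def spawn_weights(candidates, human_pos, ai_pos, human_score, ai_score, strength=2.0):
    """Weight each candidate spawn point in favour of the trailing side.

    deficit lies in [-1, 1] and is positive when the human is behind.
    advantage lies in (-1, 1) and is positive when a point is nearer the human.
    """
    total = human_score + ai_score
    deficit = 0.0 if total == 0 else (ai_score - human_score) / total
    weights = []
    for point in candidates:
        d_human = math.dist(point, human_pos)
        d_ai = math.dist(point, ai_pos)
        advantage = (d_ai - d_human) / (d_ai + d_human + 1e-9)
        weights.append(math.exp(strength * deficit * advantage))
    return weights


def pick_spawn(candidates, human_pos, ai_pos, human_score, ai_score, rng=random):
    """Sample one spawn point; the bias is probabilistic, so it is hard to spot."""
    weights = spawn_weights(candidates, human_pos, ai_pos, human_score, ai_score)
    return rng.choices(candidates, weights=weights, k=1)[0]


points = [(1.0, 0.0), (9.0, 0.0)]
near_human, near_ai = spawn_weights(points, (0.0, 0.0), (10.0, 0.0), 10, 30)
print(near_human > near_ai)  # True: the human trails, so nearby spawns are likelier
```

When the scores are level, `deficit` is zero and every point gets the same weight, so the system stays neutral until someone falls behind.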

A subtler question lurks behind the difficulty debate: if the game markets itself as “compete against real AI,” but the AI is deliberately throttled to lose 60% of the time, is that authentic? XCOM’s approach offers a precedent — the game secretly inflated hit percentages on lower difficulties, making actual hit rates 120% of displayed values. Players felt more skilled than they were, and engagement held without the experience feeling rigged. Meta’s CICERO provides another data point: it achieved top-10% human performance in Diplomacy, but its communication messages — the part players experienced most directly — were often incoherent and didn’t reflect its actual strategy. The AI was genuinely formidable at planning while being laughably bad at conversation. That lopsidedness — a rival that humiliates you in one dimension and embarrasses itself in another — may be more compelling than uniform competence.

But there’s a deeper resolution available in the .io format specifically, one that neither the Resident Evil 4 model nor the Mario Kart model anticipates. In a persistent multiplayer arena containing AI agents at various skill levels (some aggressive optimizers, some cautious beginners), difficulty adjustment doesn’t need to be a hidden system at all. It emerges naturally. Human players gravitate toward opponents they can beat and learn from encounters with those they can’t. The ecosystem is the difficulty curve.

DeepMind’s AlphaStar controversy in StarCraft II provides the most thoroughly documented case of what happens when AI competes against humans under asymmetric physical constraints. In its initial exhibition matches, AlphaStar defeated professional players TLO and MaNa. The community reaction was not awe — it was outrage.

The reason was specific and measurable. AlphaStar could observe everything visible to it across the whole map at once, while human players had to move a limited camera view. It issued commands with perfect accuracy: no mouse misclicks, ever. It sustained combat actions-per-minute (APM) above 500 for multi-second stretches, well beyond the human physical ceiling of roughly 200 to 350 effective APM. A survey of 20 experts rated AlphaStar’s peak APM and camera control as the two most important factors determining match outcomes. The victories owed less to strategy than to reflexes.

What happened next is the revealing part. DeepMind retrained AlphaStar with camera constraints and APM limits. The constrained version was “almost as strong” as the unconstrained one, reaching 7000+ MMR on their internal ladder. But in an exhibition match under these fairer conditions, professional player MaNa won. The original victories were substantially attributable to mechanical advantages, not superior strategic thinking.

The design lesson is unambiguous: eliminate mechanical asymmetry entirely so that any remaining competitive edge is purely cognitive. In a browser game, this means if humans interact through tap and swipe, the AI must operate under equivalent input constraints. No superhuman reaction times. No simultaneous multi-location actions. No information access exceeding what a human player’s screen shows. The AI’s advantage should come from better pattern recognition and strategic planning — not from being a faster clicker.
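The three constraints listed above (no superhuman reaction times, no simultaneous actions, no off-screen information) can be enforced by a thin gate between the AI agent and the game. The following is a sketch under stated assumptions: `HumanParityGate`, its default cap of five actions per second, and the 0.2-second reaction delay are illustrative values, not measured human limits or anything taken from AlphaStar.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class HumanParityGate:
    """Hypothetical filter that holds an AI agent to human-like input limits."""
    max_actions_per_sec: float = 5.0  # assumed cap, about 300 actions per minute
    reaction_delay: float = 0.2       # assumed seconds between seeing and acting
    _recent: deque = field(default_factory=deque)

    def visible(self, entities, camera, half_w, half_h):
        """Keep only (x, y) entities inside the rectangle a human screen shows."""
        cx, cy = camera
        return [e for e in entities
                if abs(e[0] - cx) <= half_w and abs(e[1] - cy) <= half_h]

    def try_act(self, now, observed_at):
        """Return True if exactly one action may be issued at time `now`."""
        if now - observed_at < self.reaction_delay:
            return False  # would be faster than the assumed reaction time
        # Forget actions that have left the one-second sliding window.
        while self._recent and now - self._recent[0] >= 1.0:
            self._recent.popleft()
        if len(self._recent) >= self.max_actions_per_sec:
            return False  # rate cap reached for this window
        self._recent.append(now)
        return True


gate = HumanParityGate(max_actions_per_sec=2)
print(gate.try_act(now=0.1, observed_at=0.0))  # False: too fast to be a reaction
print(gate.try_act(now=0.3, observed_at=0.0))  # True
print(gate.visible([(0, 0), (50, 0)], camera=(0, 0), half_w=10, half_h=10))  # [(0, 0)]
```

Putting the limits in one gate, rather than inside the agent, means every AI in the arena is held to the same rules regardless of how it was trained.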

This constraint actually makes the game more interesting, not less. It forces the AI to compete on the cognitive terrain where humans have genuine, evolved strengths: reading intentions, improvising under pressure, making creative leaps that no optimization function would find. The competition becomes a test of thinking styles, not input speeds.

Perhaps the most surprising finding in the research comes not from game design but from experimental economics. A study published in the Proceedings of the Royal Society B found that human bidding behavior fundamentally changes depending on whether the opponent is believed to be human or artificial. In common-value auctions, participants who thought they were bidding against computers bid nearly rationally — their median overbidding factor was just 0.07 to 0.10 above the theoretical optimum. But participants who believed they faced other humans dramatically overbid, with a median factor of 0.25 to 0.655, consistently losing money. Even after explicit training in optimal strategy, participants could not bid rationally against human opponents.

Post-experiment interviews exposed the mechanism: participants were driven by “a desire to win” against other humans that overrode financial self-interest. The researchers concluded that “humans assign significant future value to victories over human but not over computer opponents.” Competitive arousal — the emotional state of wanting to beat another person — drives irrational escalation in ways that simply do not activate against machines.

Design dilemma: If competitive arousal is weaker against AI, the game risks lower engagement than human-vs-human competition. The cultural framing must do the work that opponent identity normally does.

This finding cuts two ways for a human-versus-AI game. The risk is obvious: if players treat the AI as “just a bot,” they may lack the emotional investment that drives obsessive play. But the opportunity is equally clear. The current cultural moment — where AI threatens livelihoods, disrupts creative industries, and provokes genuine anxiety in 56% of Americans — offers a reframing. The AI opponent isn’t a neutral algorithm. It’s the machine that might replace you. That framing could activate the competitive arousal that a mere “play against bot” label never would. The species narrative transforms an algorithmic opponent into something worth beating.

The auction research also points to a specific multiplayer dynamic: in any shared arena containing both human and AI players, the strategic logic of human-human encounters and human-AI encounters will diverge. Humans will irrationally escalate against each other, taking risks and making emotional plays, while approaching AI opponents with cool calculation. The result is a three-way dynamic among rival humans and the AI, in which the most dangerous player in the arena might not be the strongest one but the most emotionally charged one. A human on a grudge match against another human becomes predictable prey for a patient AI watching from the margins.

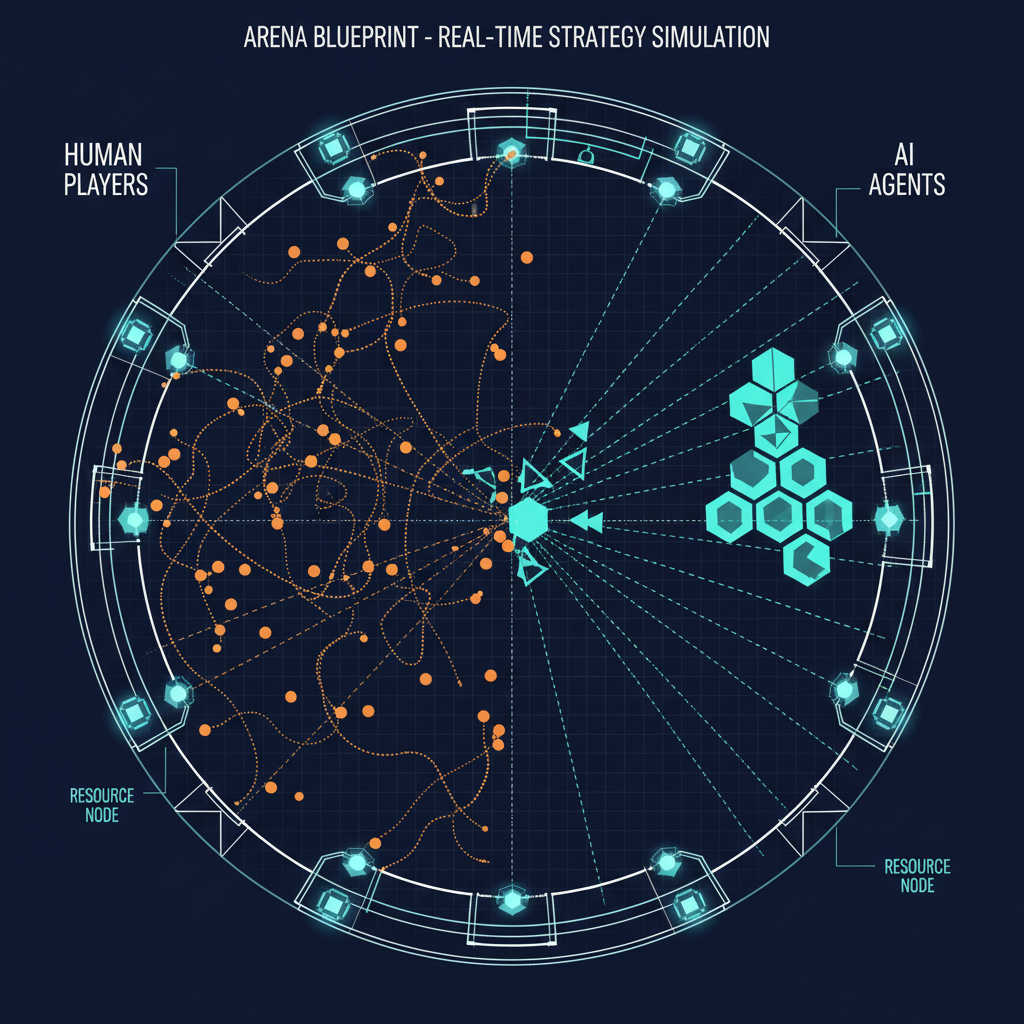

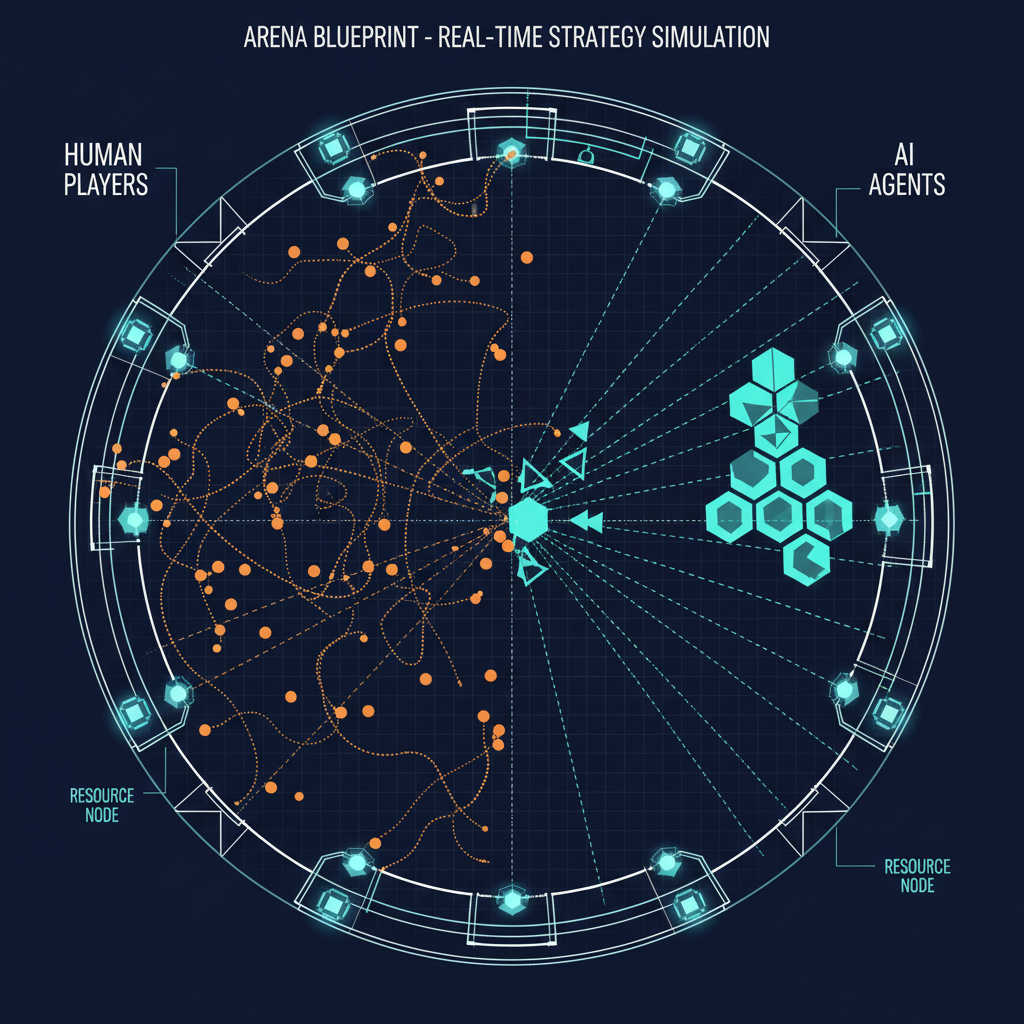

Most competitive AI-human games are either turn-based — chess, Go, Diplomacy — or real-time but highly structured, with distinct phases and defined engagements like StarCraft and Dota 2. The design space for truly shared persistent worlds, where AI agents and human players coexist continuously in the same space pursuing survival goals with fundamentally different cognitive toolkits, is almost entirely unexplored.

The closest existing models come from ecological simulations. EvoBots simulates neural-network-driven creatures that eat, fight, and reproduce in emergent ecosystems. The Life Engine builds virtual habitats where organisms compete and evolve. But these are observation platforms, not competitive games. The human watches. The human doesn’t play.

The most promising conceptual framework comes from predator-prey dynamics, where different species occupy different ecological niches with fundamentally different capabilities. A cheetah and a gazelle don’t play the same game. One optimizes for burst speed, the other for endurance, evasion, and reading the environment. Translated to AI-human competition: the AI could occupy a “predator” niche — consistent, tireless, optimized for pursuit and pattern matching — while humans occupy a “prey” niche built around unpredictability, environmental exploitation, and social coordination. Or the roles could invert: humans as hunters, using creativity and teamwork to trap an AI that excels at evasion and resource optimization.

The .io tradition provides the closest production model for this kind of real-time coexistence. In agar.io and slither.io, all players share a persistent arena with no turns, no phases, no structured rounds. The “game” is simply: exist, grow, avoid death, climb the leaderboard. Inserting AI agents into this continuous shared space — agents that pursue their own survival goals alongside human players, competing for the same resources under different cognitive constraints — is architecturally feasible and narratively irresistible. The AI is not a boss to be defeated or a puzzle to be solved. It is a co-inhabitant of the same ecosystem, and every encounter is a test of which kind of intelligence adapts faster.

Connection: The AI’s strategic superiority could paradoxically make it more vulnerable. In a spatial arena, AI movement patterns will be optimally efficient — and therefore readable by experienced human players who learn to “read” perfect behavior. The machine’s precision becomes its tell.

There’s an elegant convergence here. The deception asymmetry means AI agents will telegraph their intentions through too-efficient movements. The .io power inversion means size creates vulnerability. The invisible difficulty of a multi-agent ecosystem means the arena self-balances. And the competitive arousal research means the cultural framing — not the scoreboard — determines whether players care enough to keep playing.

Design principle: Don’t handicap either side. Choose mechanics that make both human creativity and AI optimization matter, then let the asymmetry produce the drama.

All of these findings point toward a single design archetype: a real-time arena where the rules are identical but the players are fundamentally different. Humans bring improvisation, social reading, and creative misdirection. AI brings consistency, pattern recognition, and relentless optimization. The game doesn’t need to handicap either side. It needs mechanics that make both sets of strengths matter — and a framing that makes the contest feel like it means something beyond the screen.

That framing — the transformation of individual gameplay into shareable cultural moment — is precisely where the game’s viral mechanics must take over.